Contents

1. Goal

2. Approach

2.1. Reinforcement learning (RL)

2.2. Deep learning (convolutional neural network, CNN)

3. Pitfalls encountered

4. Code implementation (Python 3.5)

5. Results and analysis

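#!/usr/bin/env python
from __future__ import print_function

import tensorflow as tf
import cv2
import sys

sys.path.append("game/")
try:
    # Relative import: works when this file is loaded as part of a package
    from . import wrapped_flappy_bird as game
except Exception:
    # Fallback when run as a script: found through the "game/" path added above
    import wrapped_flappy_bird as game

import random
import numpy as np
from collections import deque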

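'''
Observe for a while first (OBSERVE = 1000; this should not be too large),
collecting states (4 consecutive frames) => enter the training phase (no upper limit) => action
'''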
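The note above defines a state as four consecutive frames. As a minimal sketch of that idea, the snippet below keeps a rolling stack of the four most recent frames. `initial_state` and `next_state` are hypothetical helper names that do not appear in the original script, and the frames are plain placeholders here; in the real script each frame would be an 80 x 80 image, and the order in which frames are stacked may differ.

```python
from collections import deque

STACK = 4  # a state is made of 4 consecutive frames, as in the note above


def initial_state(frame):
    """Hypothetical helper: fill the stack by repeating the first frame."""
    return deque([frame] * STACK, maxlen=STACK)


def next_state(state, frame):
    """Hypothetical helper: copy the stack, append the newest frame, drop the oldest."""
    new_state = deque(state, maxlen=STACK)
    new_state.append(frame)
    return new_state


s0 = initial_state("frame0")
s1 = next_state(s0, "frame1")
print(list(s0))
print(list(s1))
```

Copying the deque rather than mutating it keeps the previous state intact, which matters if both the old and the new state are stored together as one transition.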

GAME = 'bird'  # the name of the game being played, used for log files
ACTIONS = 2  # number of valid actions (up, down)
GAMMA = 0.99  # decay rate of past observations
OBSERVE = 1000.  # timesteps to observe before training
EXPLORE = 3000000.  # frames over which to anneal epsilon
FINAL_EPSILON = 0.0001  # final value of epsilon (exploration)
INITIAL_EPSILON = 0.1  # starting value of epsilon
REPLAY_MEMORY = 50000  # number of previous transitions to remember
BATCH = 32  # size of minibatch
FRAME_PER_ACTION = 1

# Alternative settings, left commented out:
# GAME = 'bird'  # the name of the game being played, used for log files
# ACTIONS = 2  # number of valid actions
# GAMMA = 0.99  # decay rate of past observations
# OBSERVE = 100000.  # timesteps to observe before training
# EXPLORE = 2000000.  # frames over which to anneal epsilon
# FINAL_EPSILON = 0.0001  # final value of epsilon
# INITIAL_EPSILON = 0.0001  # starting value of epsilon
# REPLAY_MEMORY = 50000  # number of previous transitions to remember
# BATCH = 32  # size of minibatch
# FRAME_PER_ACTION = 1
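The constants `OBSERVE`, `EXPLORE`, `INITIAL_EPSILON` and `FINAL_EPSILON` describe an epsilon that is annealed over a number of frames, but the schedule itself is not shown in this section. The sketch below assumes a linear decay that starts once the observation phase ends, which is a common choice and not necessarily what the full script does. `epsilon_at` and `choose_action` are hypothetical names introduced for illustration.

```python
import random

OBSERVE = 1000.          # timesteps to observe before training
EXPLORE = 3000000.       # frames over which to anneal epsilon
INITIAL_EPSILON = 0.1    # starting value of epsilon
FINAL_EPSILON = 0.0001   # final value of epsilon


def epsilon_at(t):
    """Epsilon after t timesteps, assuming linear annealing after OBSERVE steps."""
    if t <= OBSERVE:
        return INITIAL_EPSILON
    frac = min((t - OBSERVE) / EXPLORE, 1.0)
    return INITIAL_EPSILON - frac * (INITIAL_EPSILON - FINAL_EPSILON)


def choose_action(q_values, epsilon, rng=random):
    """Epsilon-greedy choice: random action with probability epsilon, else the best one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda i: q_values[i])


for t in (0, 1000, 1501000, 3001000):
    print(t, epsilon_at(t))
```

With a starting epsilon of 0.1, one action in ten is random at the beginning of training; by the end of the schedule the agent acts almost entirely on its Q-value estimates.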

def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.01)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.01, shape=shape)
    return tf.Variable(initial)


# padding = 'SAME'  => new_height = new_width = W / S (rounded up)
# padding = 'VALID' => new_height = new_width = (W - F + 1) / S (rounded up)
def conv2d(x, W, stride):
    return tf.nn.conv2d(x, W, strides=[1, stride, stride, 1], padding="SAME")


def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")
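The two padding rules quoted in the comments before `conv2d` can be checked with plain arithmetic, without TensorFlow. In this small sketch `W` is the input width, `F` the filter width and `S` the stride; the function names are illustrative only.

```python
import math


def out_size_same(w, s):
    """Output width for padding='SAME': ceil(W / S)."""
    return math.ceil(w / s)


def out_size_valid(w, f, s):
    """Output width for padding='VALID': ceil((W - F + 1) / S)."""
    return math.ceil((w - f + 1) / s)


# An 80-wide input with an 8-wide filter and stride 4
print(out_size_same(80, 4))
print(out_size_valid(80, 8, 4))
```

With `SAME` padding the filter size does not affect the output size, which is why only the strides matter when sizing the layers of this network.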

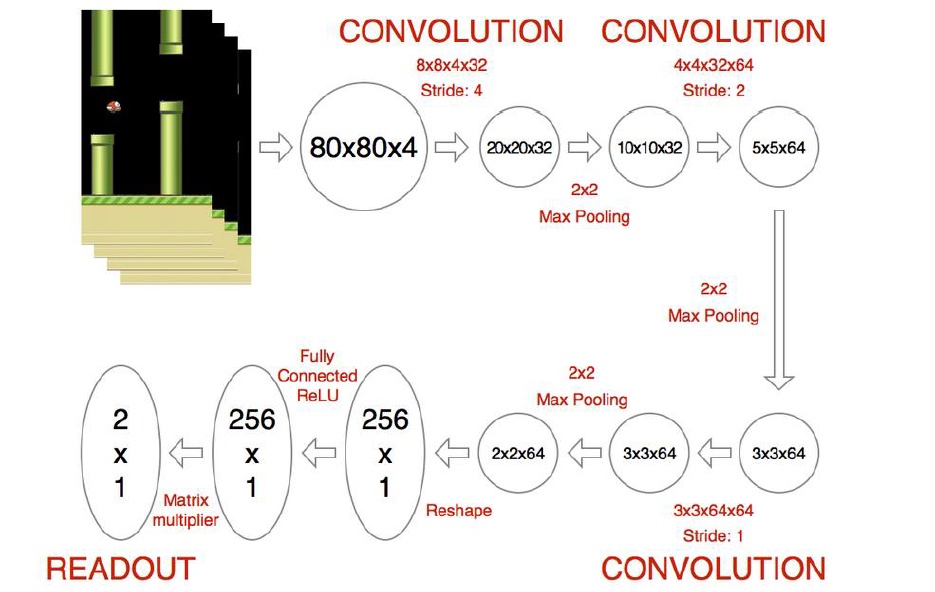

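"""
Data flow: 80 * 80 * 4
conv1 (8 * 8 * 4 * 32, stride = 4) -> 20 * 20 * 32, pool (stride = 2) -> 10 * 10 * 32 (height = width = 80 / 4 = 20, then 20 / 2 = 10)
conv2 (4 * 4 * 32 * 64, stride = 2) -> 5 * 5 * 64, pool (stride = 2) -> 3 * 3 * 64
conv3 (3 * 3 * 64 * 64, stride = 1) -> 3 * 3 * 64 = 576
576 is the flattened size needed when reshaping before the fully connected (FC) layer.
Note: createNetwork() below feeds h_conv2 (5 * 5 * 64) into conv3 and then pools the result,
so the tensor that gets flattened is h_pool3 (3 * 3 * 64 = 576) rather than h_conv3.
"""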
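The layer sizes can be traced step by step in plain Python. This sketch follows the order of operations in `createNetwork()` as written (conv3 reads the output of conv2 directly and is pooled afterwards) and assumes `SAME` padding throughout, so each layer's width is the previous width divided by the stride, rounded up.

```python
import math


def same(size, stride):
    """Output width of a 'SAME'-padded convolution or pooling layer."""
    return math.ceil(size / stride)


size = 80                 # input frames are 80 x 80
conv1 = same(size, 4)     # conv1, stride 4
pool1 = same(conv1, 2)    # 2x2 max pool, stride 2
conv2 = same(pool1, 2)    # conv2, stride 2
conv3 = same(conv2, 1)    # conv3, stride 1, applied to the conv2 output
pool3 = same(conv3, 2)    # 2x2 max pool, stride 2

flat_with_pool = pool3 * pool3 * 64     # size used by W_fc1 = [576, 512]
flat_without_pool = conv3 * conv3 * 64  # size in the commented-out [1600, 512] variant

print(conv1, pool1, conv2, conv3, pool3)
print(flat_with_pool, flat_without_pool)
```

The second figure matches the commented-out `W_fc1 = weight_variable([1600, 512])` line, which corresponds to flattening the conv3 output without the final pooling step.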

def createNetwork():
    # network weights
    W_conv1 = weight_variable([8, 8, 4, 32])
    b_conv1 = bias_variable([32])

    W_conv2 = weight_variable([4, 4, 32, 64])
    b_conv2 = bias_variable([64])

    W_conv3 = weight_variable([3, 3, 64, 64])
    b_conv3 = bias_variable([64])

    W_fc1 = weight_variable([576, 512])
    b_fc1 = bias_variable([512])
    # W_fc1 = weight_variable([1600, 512])
    # b_fc1 = bias_variable([512])

    W_fc2 = weight_variable([512, ACTIONS])
    b_fc2 = bias_variable([ACTIONS])

    # input layer: a batch of states, each 80 x 80 with 4 stacked frames
    s = tf.placeholder("float", [None, 80, 80, 4])

    # hidden layers
    h_conv1 = tf.nn.relu(conv2d(s, W_conv1, 4) + b_conv1)
    h_pool1 = max_pool_2x2(h_conv1)

    h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2, 2) + b_conv2)
    h_pool2 = max_pool_2x2(h_conv2)  # not used below: conv3 reads h_conv2 directly

    h_conv3 = tf.nn.relu(conv2d(h_conv2, W_conv3, 1) + b_conv3)
    h_pool3 = max_pool_2x2(h_conv3)

    h_pool3_flat = tf.reshape(h_pool3, [-1, 576])
    # h_conv3_flat = tf.reshape(h_conv3, [-1, 1600])

    h_fc1 = tf.nn.relu(tf.matmul(h_pool3_flat, W_fc1) + b_fc1)
    # h_fc1 = tf.nn.relu(tf.matmul(h_conv3_flat, W_fc1) + b_fc1)

    # readout layer: one Q-value per action
    readout = tf.matmul(h_fc1, W_fc2) + b_fc2

    return s, readout, h_fc1

def trainNetwork(s, readout, h_fc1, sess):
    # define the cost function
    a = tf.placeholder("float", [None, ACTIONS])
    y = tf.placeholder("float", [None])
    # reduction_indices is the same as axis: 0 = along columns, 1 = along rows
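The rest of `trainNetwork` is not shown in this section, so the following is only a sketch of how the placeholders `a` (one-hot actions) and `y` (target values) are typically combined in a DQN cost, written with plain Python lists rather than TensorFlow. It assumes the usual formulation: sum each row of `readout * a` (a reduction along axis 1) to pick the Q-value of the action taken, then average the squared difference from the target. All function names are hypothetical.

```python
GAMMA = 0.99  # decay rate, as in the constants above


def q_for_taken_action(readout, a):
    """Row-wise sum of readout * a (a reduction along axis 1)."""
    return [sum(q * m for q, m in zip(q_row, a_row))
            for q_row, a_row in zip(readout, a)]


def mse_cost(y, q_taken):
    """Mean squared error between the targets y and the Q-values of the taken actions."""
    return sum((t - q) ** 2 for t, q in zip(y, q_taken)) / len(y)


def td_target(reward, next_q_values, terminal, gamma=GAMMA):
    """Assumed Q-learning target: r if terminal, else r + gamma * max Q(s', a')."""
    if terminal:
        return reward
    return reward + gamma * max(next_q_values)


readout = [[1.0, 2.0], [3.0, 4.0]]  # Q-values for a batch of 2 states
a = [[1, 0], [0, 1]]                # one-hot: action 0 taken first, then action 1
y = [2.0, 4.0]                      # target values for the batch

q_taken = q_for_taken_action(readout, a)
print(q_taken)
print(mse_cost(y, q_taken))
```

Multiplying by the one-hot action and summing along axis 1 leaves exactly one Q-value per sample, which is why `y` has shape `[None]` while `a` has shape `[None, ACTIONS]`.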
